SplitMetrics client Vezet shares how it improved the click-to-install (CTI) conversion rate of its ridesharing app with the help of app store A/B testing.

My name is Andrey and I am responsible for contextual advertising and ASO at Vezet. We provide on-demand ridesharing services in more than 120 cities across Russia. I've learned a lot about conversion rate optimization, and now I'd like to share my experience with you.

App Store Optimization is one of the most useful and cost-effective tools for increasing the number of app installs, and optimizing the click-to-install (CTI) conversion rate is one of its core aspects.

We ran countless experiments to understand exactly how each app store page element affects CTI. We used Google Play Console to experiment with our Android app and SplitMetrics to experiment with our iOS app. SplitMetrics lets you test different elements on a stand-alone web page that emulates a real App Store page.

Unlike Google Play, the App Store doesn't let you test changes on organic traffic. However, you can link the smart banner on your website (the banner that pops up at the top of the web page) to your SplitMetrics test page. We promote our test page with Google Ads (Display Network) and Facebook Ads, because both channels work well with custom links.

The experiment ends when its results reach statistical significance. You can see this either in the platform's data or by analyzing the test data yourself.
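To make "statistical significance" more concrete, here is a minimal sketch of a two-sided two-proportion z-test on CTI. It assumes each visitor to the test page either installs or doesn't, and it is a standard textbook test, not necessarily the method SplitMetrics uses internally. The function name and the visitor and install counts are hypothetical.

```python
import math


def two_proportion_z_test(installs_a: int, visitors_a: int,
                          installs_b: int, visitors_b: int) -> tuple[float, float]:
    """Return (z, two-sided p-value) for the CTI difference between A and B."""
    cti_a = installs_a / visitors_a
    cti_b = installs_b / visitors_b
    # Pooled CTI under the null hypothesis that both variations convert equally.
    pooled = (installs_a + installs_b) / (visitors_a + visitors_b)
    se = math.sqrt(pooled * (1 - pooled) * (1 / visitors_a + 1 / visitors_b))
    z = (cti_b - cti_a) / se
    # erfc(|z| / sqrt(2)) equals 2 * (1 - Phi(|z|)), the two-sided p-value.
    p_value = math.erfc(abs(z) / math.sqrt(2))
    return z, p_value


# Hypothetical counts: 5,000 test-page visitors per variation.
z, p = two_proportion_z_test(installs_a=600, visitors_a=5000,
                             installs_b=680, visitors_b=5000)
print(f"z = {z:.2f}, p = {p:.4f}")  # p < 0.05 is a common (not universal) threshold
```

With these made-up numbers (12.0% vs 13.6% CTI), the difference clears the usual 0.05 threshold; with fewer visitors, the same gap would not.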

To reach significance, the testing system needs enough data, spread across all the variations. But sometimes that takes an extremely long time. This becomes critical if you pay for test traffic, or if one variation is clearly worse but the system doesn't detect it yet, so you keep losing users in the meantime. In such cases, it makes sense to look at the current data, stop the experiment, and choose the most effective variation. You also don't have to wait for the test to finish when testing seasonal creatives: a seasonal creative is temporary and is later replaced by the original version anyway. It should, however, still perform better than the original.
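The article doesn't say how to judge the current data when stopping early. One common approach, shown here as an assumption rather than as Vezet's or SplitMetrics' method, is a Bayesian estimate of the probability that a variation beats the control: model each variation's CTI with a Beta posterior (uniform Beta(1, 1) prior) and compare random draws. The function name, the 20,000-draw default, and the counts are hypothetical.

```python
import random


def prob_b_beats_a(installs_a: int, visitors_a: int,
                   installs_b: int, visitors_b: int,
                   draws: int = 20_000, seed: int = 42) -> float:
    """Monte Carlo estimate of P(CTI_B > CTI_A) under Beta(1, 1) priors."""
    rng = random.Random(seed)  # fixed seed so the estimate is reproducible
    wins = 0
    for _ in range(draws):
        # Posterior for each CTI: Beta(1 + installs, 1 + non-installs).
        cti_a = rng.betavariate(1 + installs_a, 1 + visitors_a - installs_a)
        cti_b = rng.betavariate(1 + installs_b, 1 + visitors_b - installs_b)
        if cti_b > cti_a:
            wins += 1
    return wins / draws


# Hypothetical interim data, collected before the planned sample size is reached.
p_win = prob_b_beats_a(installs_a=90, visitors_a=1000,
                       installs_b=130, visitors_b=1000)
print(f"P(B beats A) = {p_win:.3f}")
```

A team might stop once this probability passes a threshold it chose in advance (95% or 99%, for example); the threshold is a business judgment, and repeatedly peeking at ordinary p-values instead would inflate the false-positive rate.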

By the way, SplitMetrics can also redistribute traffic using multi-armed bandit routing: more traffic is sent to the winning variation, which helps it reach significance sooner so the experiment can be completed.
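SplitMetrics' exact routing algorithm isn't described here. The sketch below uses Thompson sampling, one common multi-armed bandit technique, to show the general idea: for each visitor, draw a plausible CTI for every variation from its Beta posterior and show the variation with the highest draw. The variation names and "true" CTIs are hypothetical simulation inputs.

```python
import random


def thompson_route(stats: dict[str, list[int]], rng: random.Random) -> str:
    """Pick the variation for the next visitor by Thompson sampling.

    stats maps variation name -> [installs, visitors].
    """
    best_name, best_draw = "", -1.0
    for name, (installs, visitors) in stats.items():
        # Sample a plausible CTI from this variation's Beta(1, 1)-prior posterior.
        draw = rng.betavariate(1 + installs, 1 + visitors - installs)
        if draw > best_draw:
            best_name, best_draw = name, draw
    return best_name


def simulate(true_cti: dict[str, float], visitors: int,
             seed: int = 7) -> dict[str, list[int]]:
    """Route hypothetical visitors and record installs per variation."""
    rng = random.Random(seed)
    stats = {name: [0, 0] for name in true_cti}
    for _ in range(visitors):
        name = thompson_route(stats, rng)
        stats[name][1] += 1  # one more visitor saw this variation
        if rng.random() < true_cti[name]:
            stats[name][0] += 1  # that visitor installed
    return stats


# Hypothetical true CTIs; in a real test these are unknown.
result = simulate({"car": 0.14, "phone": 0.10}, visitors=5000)
for name, (installs, shown) in result.items():
    print(f"{name}: {shown} visitors, {installs} installs")
```

Because weaker variations are drawn less and less often, fewer paid visitors are spent on them, which is the trade-off the paragraph above describes.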

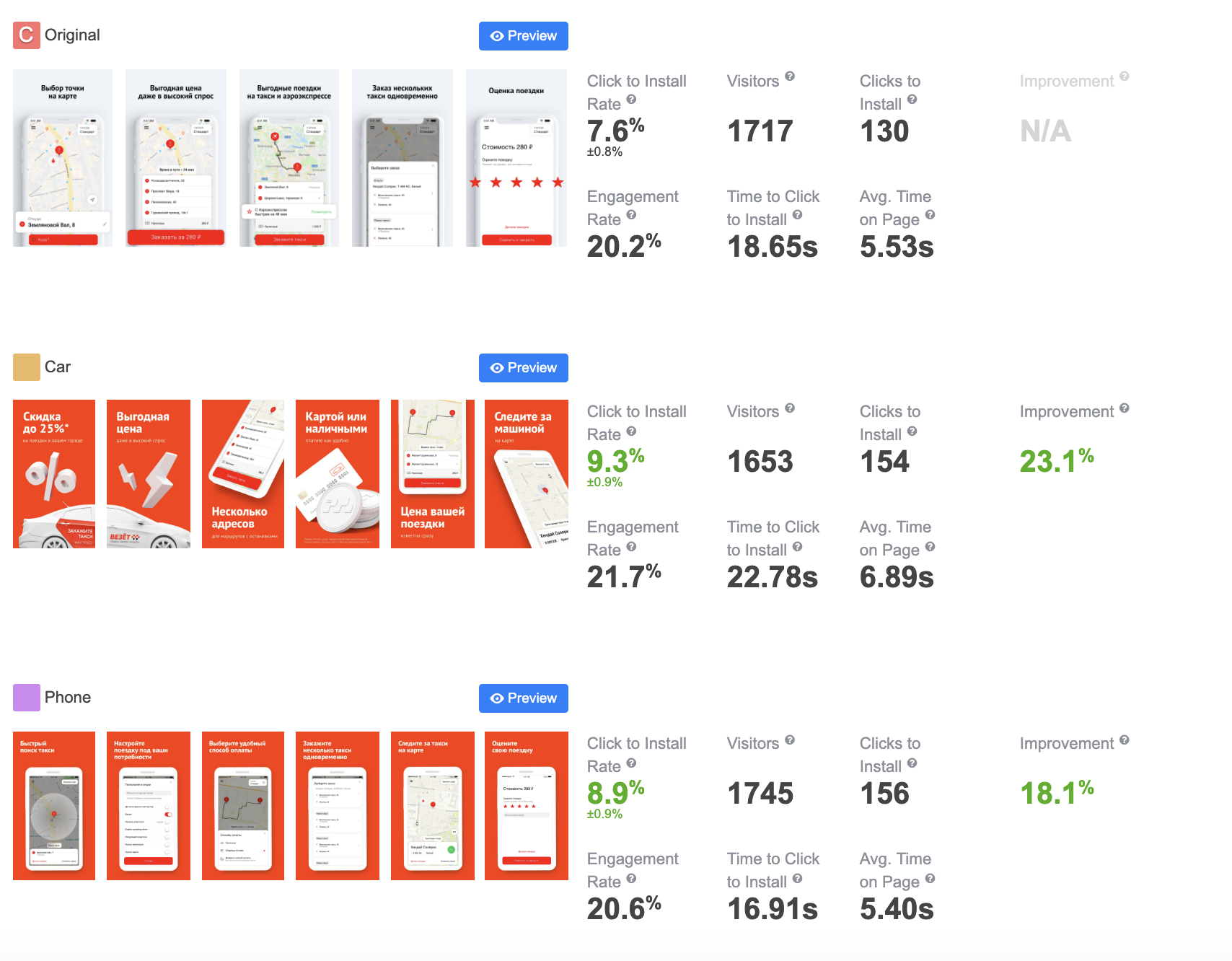

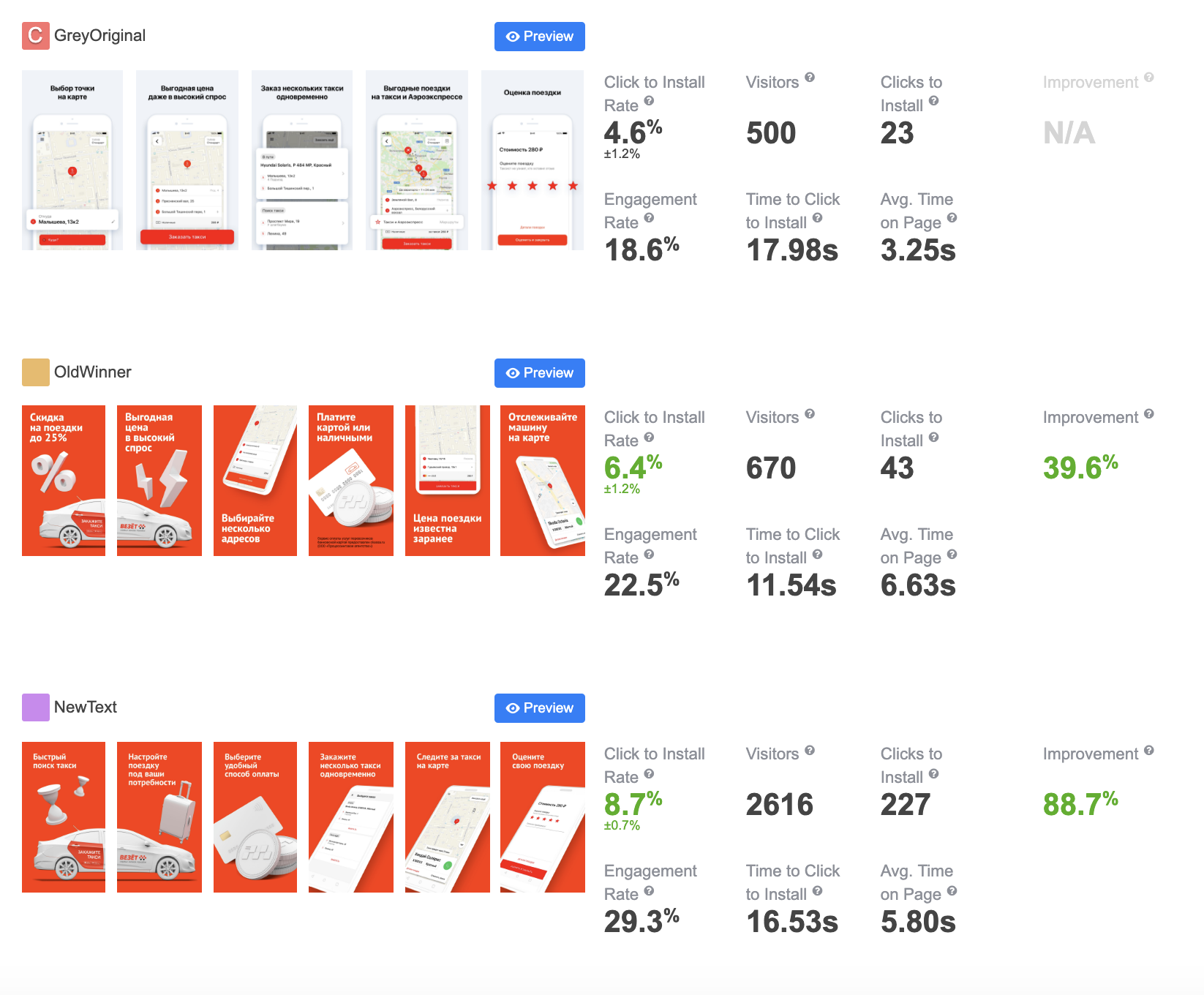

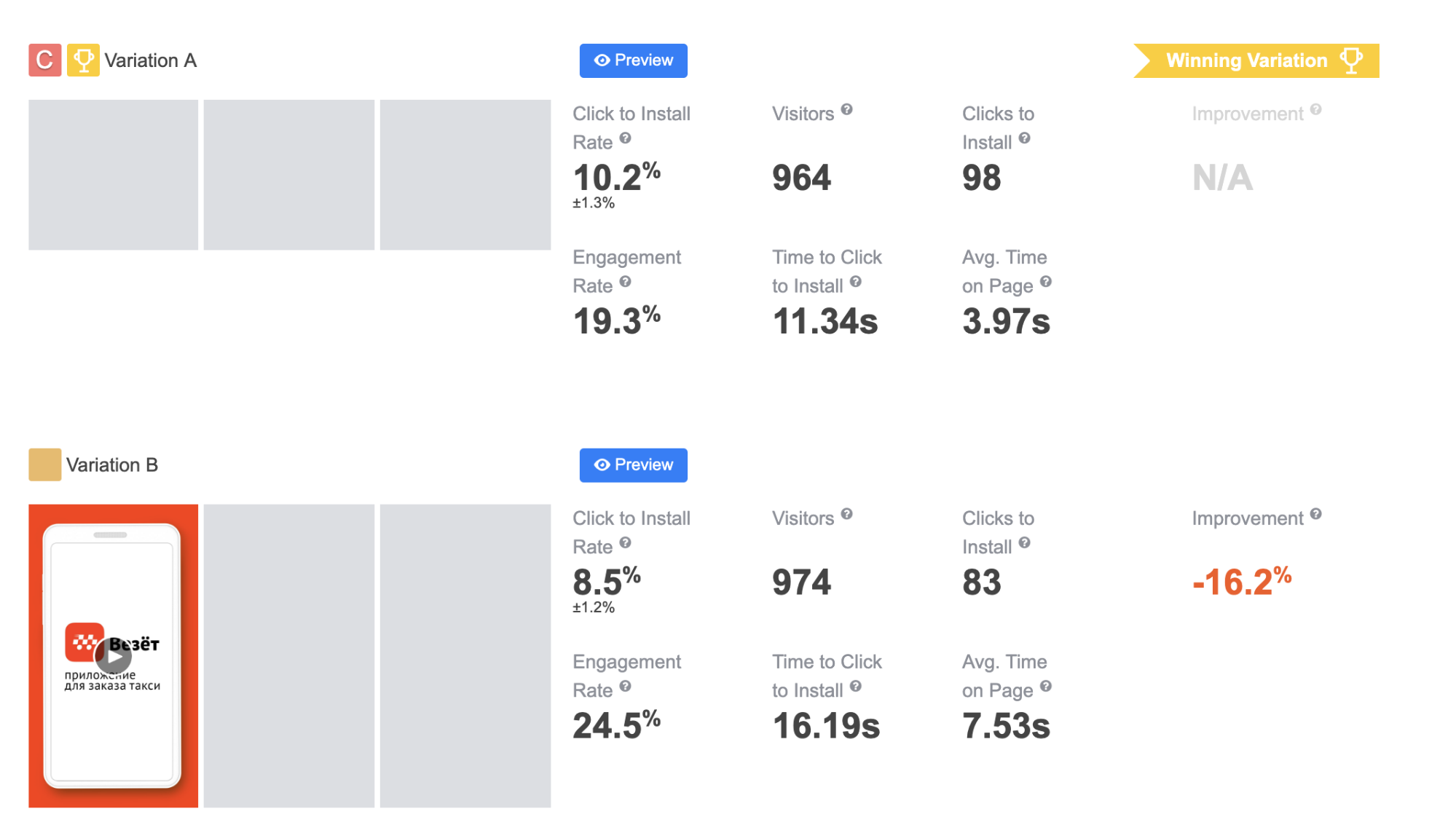

We first ran a test with options similar to those we had tested in Google Play Console, and here, too, the variation with a car won.

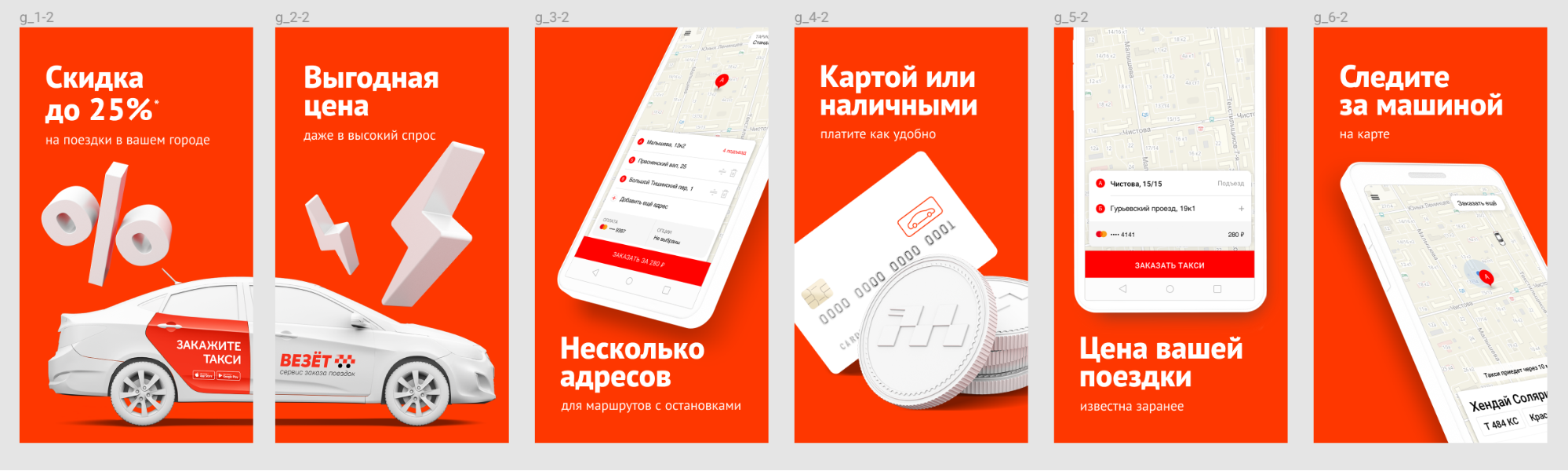

Variation with a car

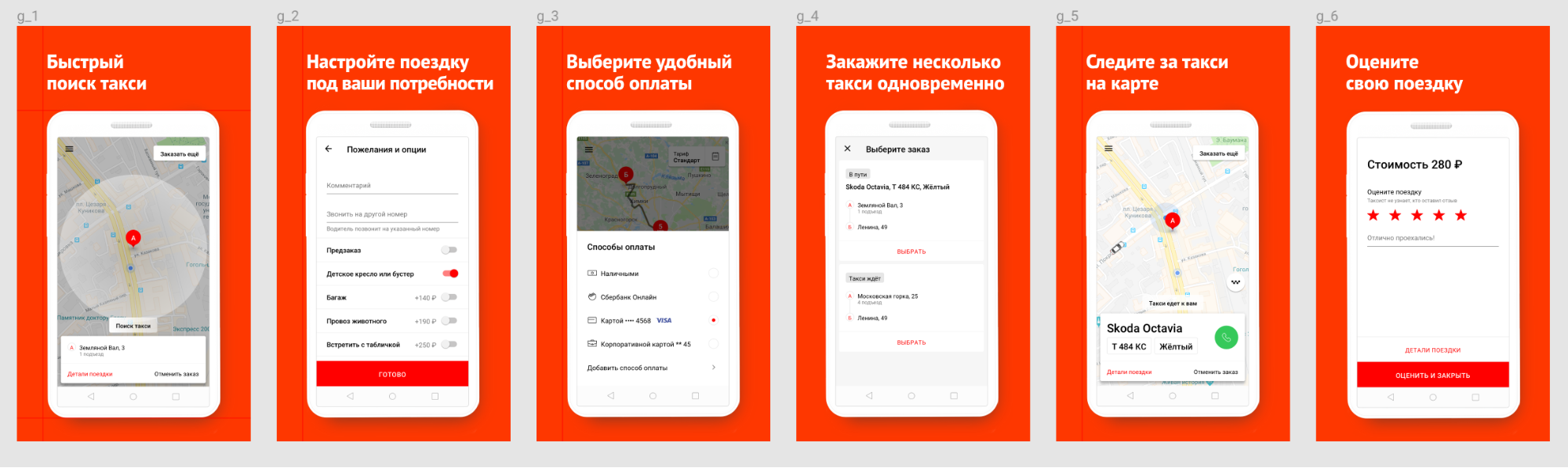

Variation with a phone

But the difference was too small, so we decided to run another set of experiments. We compared our standard visuals with an identical set that had only one modification: all font sizes were enlarged.

Then we started testing combinations of previously tested variations. We took the usability slogans from the first test and added them to the visuals of the current winner. The new version won: its CTI was 2.7% higher than that of the previous winner and 4.1% higher than that of the current version.
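The article doesn't state whether the 2.7% and 4.1% figures are relative lifts or percentage-point differences, and the two can differ a lot. This small sketch computes both for a pair of hypothetical CTIs (not Vezet's actual numbers); the function name is illustrative.

```python
def lift(cti_new: float, cti_old: float) -> tuple[float, float]:
    """Return (relative lift in %, absolute difference in percentage points)."""
    relative = (cti_new - cti_old) / cti_old * 100
    absolute_pp = (cti_new - cti_old) * 100
    return relative, absolute_pp


# Hypothetical CTIs expressed as fractions: 30.0% -> 30.8%.
rel, pp = lift(cti_new=0.308, cti_old=0.300)
print(f"relative lift: {rel:.1f}%, absolute: {pp:.1f} pp")
# prints: relative lift: 2.7%, absolute: 0.8 pp
```

In this example, a 2.7% relative lift corresponds to less than one percentage point of absolute CTI, which is worth keeping in mind when comparing results across tests.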

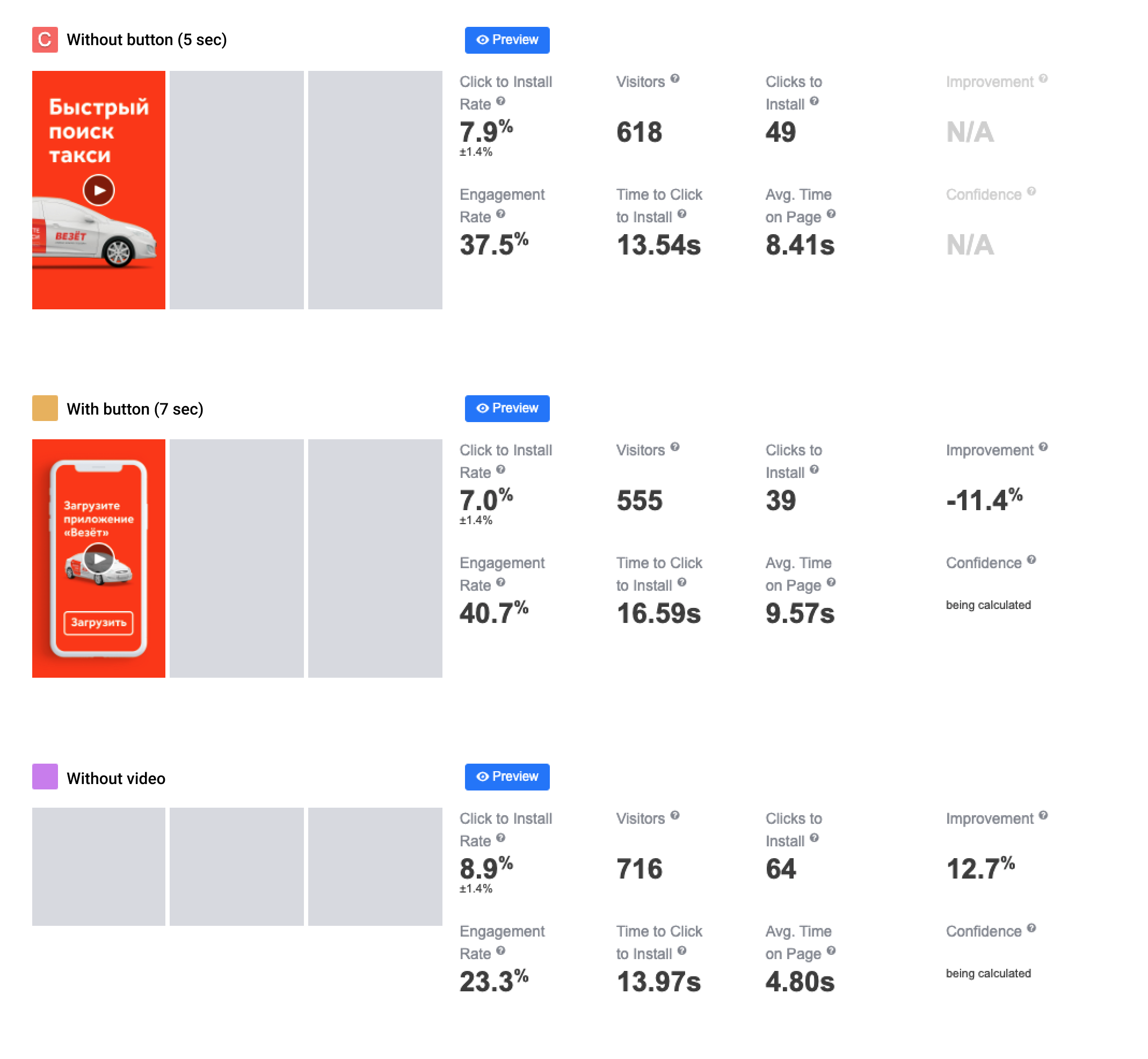

We looked at how our competitors were using videos but found nothing interesting, so we had to test videos ourselves and make decisions based on app store A/B testing experiments. We first developed a simple screencast that demonstrated the advantages of our app. According to the SplitMetrics platform, it had a neutral or even negative effect on CTI.

Later, we tried videos again, this time using an "animated screenshots" concept in which the first two screenshots were animated. We also shortened the videos from 15 to 5 seconds and made versions with and without a call to action (CTA).

For us, video is definitely not necessary. Editor's note: videos do not always improve conversion. See tip #5.

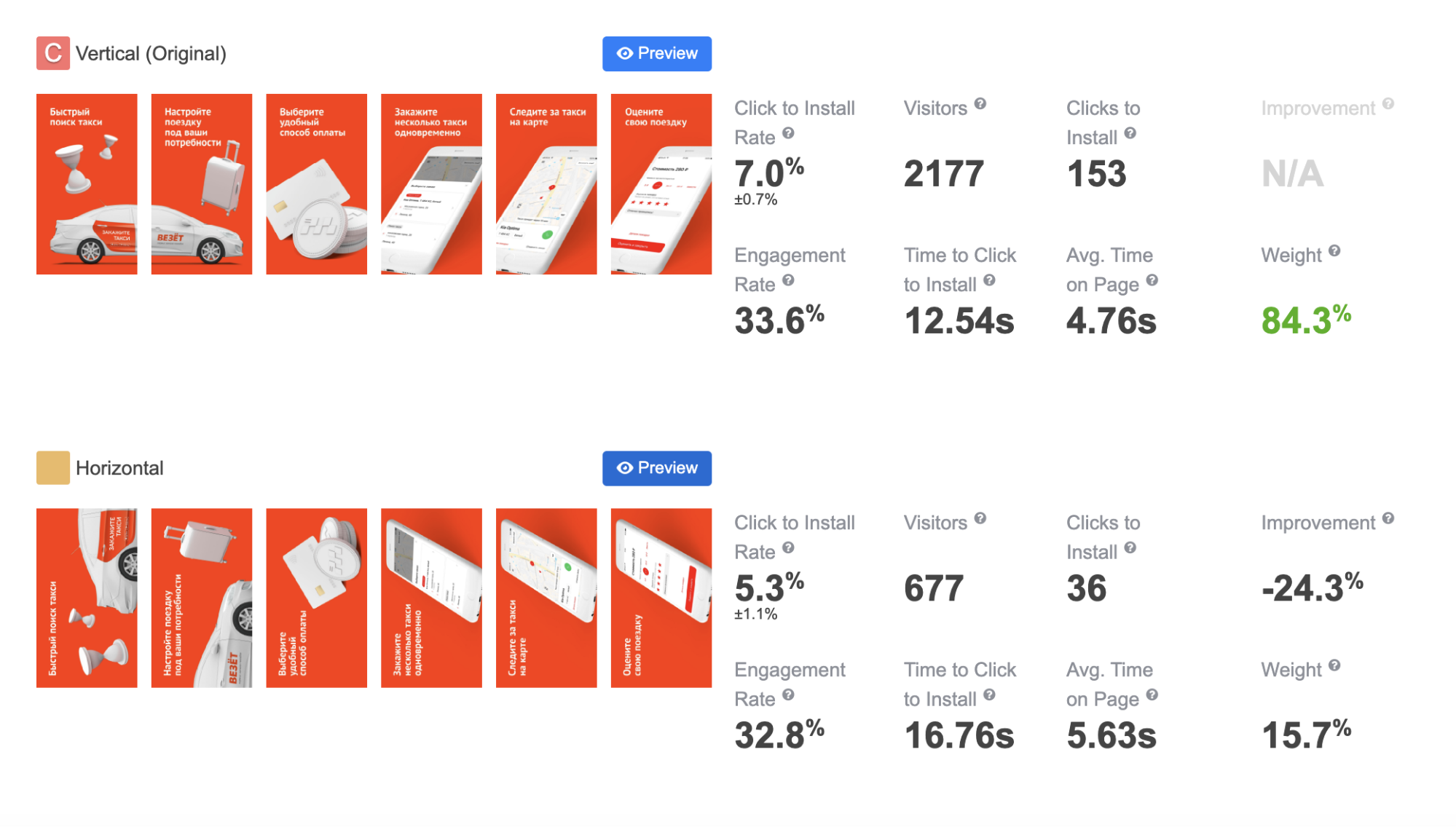

We noticed that many of our colleagues had started switching to landscape screenshots in the app stores. We developed landscape screenshots with the same content as the portrait screenshots we were using. Here is what the experiments showed.

Our landscape screenshots performed even worse in the App Store.

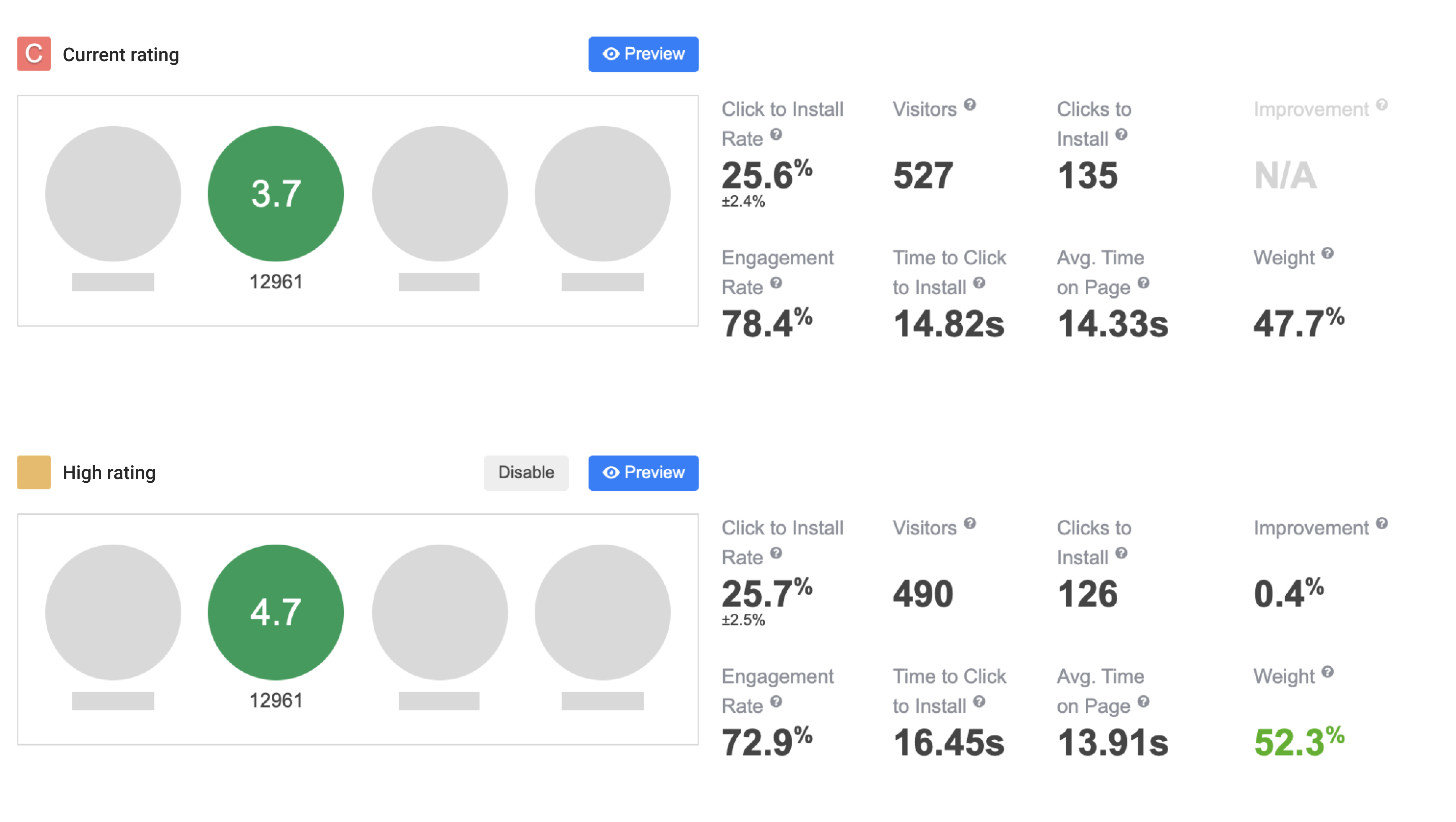

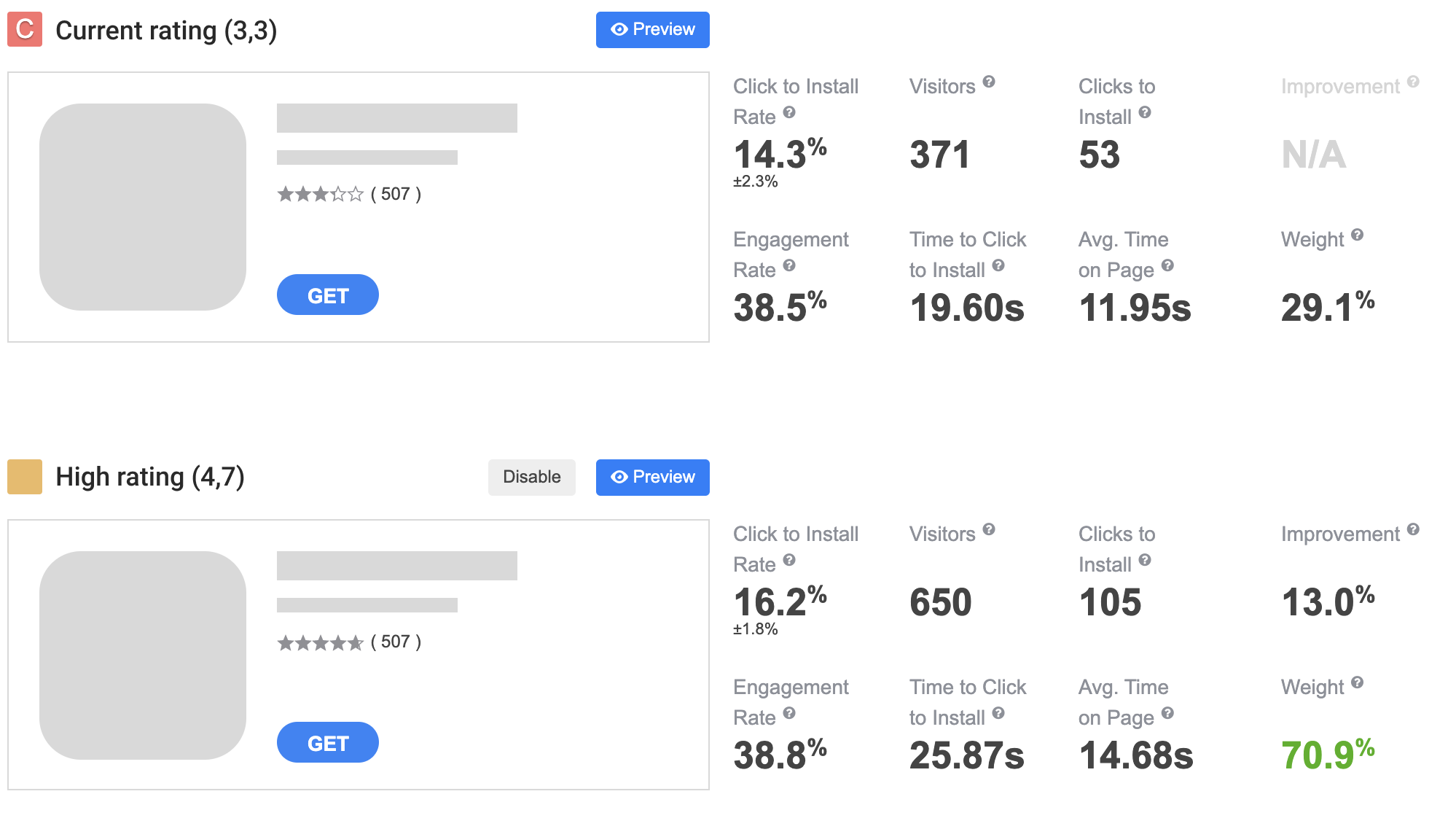

We once had problems with our app store ratings, so we decided to test whether a different rating would change our CTI. We used SplitMetrics for this, even for the Android app, because Google Play Console doesn't support rating experiments.

Google Play rating test results – SplitMetrics

So, Apple users turned out to be more sensitive to app ratings. Either way, we are constantly working on improving our service quality and the app's performance.